An Analysis of Artificial Intelligence in the Internet of Behaviors

The emergence of the Internet of Behaviors (IoB) represents a paradigm shift in the digital ecosystem, moving beyond the simple connectivity of devices to the sophisticated analysis and influencing of human actions. Powered by Artificial Intelligence (AI), IoB leverages the vast data streams generated by the Internet of Things (IoT) to build detailed psychological and behavioral profiles of individuals. This report provides a comprehensive analysis of the symbiotic relationship between AI and IoB, examining its technological underpinnings, cross-sector applications, and the profound societal, ethical, and regulatory challenges it presents.

The analysis finds that AI is the indispensable engine of IoB, transforming raw data from sensors and digital interactions into predictive, actionable intelligence. Through machine learning techniques such as pattern recognition, anomaly detection, and predictive analytics, AI enables systems to not only understand past behavior but to anticipate and proactively shape future actions. This capability is driving transformative innovations across key sectors. In commerce, it fuels hyper-personalized marketing and customer experiences. In healthcare, the "Internet of Bodies" facilitates remote patient monitoring and proactive wellness interventions. In the public sector, it underpins smart city management and informs data-driven policy.

However, this transformative potential is matched by critical risks. The IoB ecosystem inherits and amplifies the cybersecurity vulnerabilities of IoT, creating an expanded attack surface where breaches can expose highly intimate behavioral data. This pervasive data collection raises significant privacy concerns, challenging principles of data minimization, transparency, and informed consent. Most critically, the report identifies profound ethical dilemmas surrounding behavioral manipulation, algorithmic bias, and the erosion of individual autonomy. The IoB framework creates a significant power asymmetry between the institutions deploying the technology and the individuals being analyzed, a dynamic that current regulatory frameworks are not fully equipped to address.

Existing data protection laws, such as the EU's General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA), provide a foundational but incomplete governance model. These regulations are primarily focused on governing the inputs of the data lifecycle—collection, consent, and storage. The unique challenge of IoB, however, lies in its outputs: the behavioral predictions and algorithmic nudges designed to influence human choice. This creates a regulatory gap that requires a new focus on algorithmic accountability and the ethics of influence.

Looking forward, the IoB is projected to see widespread adoption, converging with other advanced technologies like agentic AI and spatial computing to create ever more sophisticated systems of behavioral analysis and management. The long-term societal impact could be the creation of a "choice architecture" at a societal scale, an environment where individual and collective options are persistently and subtly shaped by algorithms.

This report concludes with strategic recommendations for policymakers and business leaders. Policymakers are urged to move beyond data privacy to develop frameworks for algorithmic accountability, establish clear prohibitions on manipulative uses of IoB, and empower users with meaningful control. Businesses are advised to embed ethical principles and robust cybersecurity into their IoB systems from the ground up and to foster trust through radical transparency. Ultimately, navigating the future of IoB requires a proactive, multi-stakeholder commitment to ensuring that this powerful technology is harnessed to serve human values and dignity, not to subordinate them.

I. The Emergence of the Internet of Behaviors: From Connected Things to Analyzed Actions

The technological landscape is undergoing a fundamental reorientation. For the past decade, the dominant paradigm has been the Internet of Things (IoT), a sprawling network of connected devices focused on gathering data about the state of the physical world. A new, more consequential paradigm is now emerging: the Internet of Behaviors (IoB). The IoB does not represent a new category of technology but rather a strategic convergence of existing capabilities—technology, data analytics, and behavioral science—that re-contextualizes the purpose of data collection itself. It marks a deliberate evolution from monitoring connected things to analyzing and influencing the actions of the people who use them. This section defines the IoB, traces its lineage from the IoT, and establishes the core principles that underpin its architecture and intent.

1.1 Defining the Internet of Behaviors (IoB)

The Internet of Behaviors is formally defined as a network of interconnected physical and digital objects that collect and exchange information over the Internet, linking this data to specific human behaviors, which can be either directly measured or inferred. This concept gained prominence when the technology consulting firm Gartner highlighted it as one of its Top Strategic Technology Trends for 2021, signaling its growing importance in both commercial and public sectors.

The term itself was first coined in 2012 by Professor Göte Nyman, who envisioned a network where distinct behavioral patterns could be assigned a unique "IoB address," much like a device is assigned an IP address in the IoT. Nyman's original concept underscored a critical ambition: to make human behavior a quantifiable, addressable, and ultimately programmable data point. While the technical implementation has evolved, this core intent remains. The primary objective of modern IoB systems is to interpret the vast quantities of collected data from a human psychological and sociological perspective. The ultimate goal is to apply this understanding to influence or actively change human behavior to achieve specific outcomes, ranging from commercial interests, such as increasing sales, to public policy goals, like improving public health compliance.

It is crucial to recognize that IoB is not an entirely new phenomenon but rather a holistic integration and scaling of existing practices. Behavioral-targeted advertising, which tracks online activity to serve personalized ads, and the use of Bluetooth and Wi-Fi signals in retail environments to infer shopper traffic patterns are early, fragmented forms of IoB. The modern IoB framework integrates these and many other technologies into a comprehensive system capable of following and analyzing an individual's life and behaviors across a multitude of physical and digital interactions.

1.2 The Evolution from IoT to IoB

The relationship between the Internet of Things and the Internet of Behaviors is hierarchical and sequential. The IoT serves as the foundational data collection layer, while the IoB is the analytical and application layer that gives that data meaning and purpose in a human context.

The IoT comprises the tens of billions of internet-connected devices that permeate modern life—from consumer products like smartwatches, home assistants, and connected vehicles to industrial sensors and municipal infrastructure. The primary function of this network is to capture raw data from the physical and digital worlds and convert it into structured information. For example, a smart thermostat reports temperature data, a GPS tracker provides location coordinates, and a fitness band measures heart rate. In this stage, the data is descriptive; it reports on the state of an object or a physiological metric.

The IoB is the essential next step that extends the value of this information. It takes the raw data generated by the IoT and contextualizes it by attaching it to specific human behaviors. The information from the IoT is the "what"; the IoB seeks to understand the "why" and then influence the "what next." For instance:

An IoT-enabled vehicle provides data on speed, acceleration, and location. The IoB framework analyzes these data points to infer a pattern of "aggressive driving" or to map a "daily commute route".

An IoT-enabled smartwatch collects biometric data. The IoB interprets a sustained high heart rate combined with low activity as a potential health risk, triggering an alert.

An IoT-enabled point-of-sale system records a transaction. The IoB links this purchase to a user's online browsing history and location data to build a comprehensive consumer profile.

This process can be conceptualized as a continuous feedback loop. A user interacts with an IoT-enabled device, which generates a stream of data. Applications capture and process this data, then feed it into an IoB analytics engine. The engine derives behavioral insights and uses them to trigger a responsive action (such as a personalized advertisement, a health recommendation, or a price adjustment), which in turn influences the user's next behavior. This dynamic transforms the relationship between data and action. As one analysis puts it, "the IoT surely converts data to information but the IoB translates knowledge into real wisdom". This "wisdom" is the capacity not only to understand but also to shape human action, representing a significant escalation in the power and purpose of data technology.
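One turn of this loop can be illustrated with the smartwatch example above. The following is a minimal Python sketch, not a description of any real product: the `Reading` schema, the threshold constants, the behavior label, and the `ACTIONS` table are all hypothetical, and the thresholds are illustrative rather than clinical guidance.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Reading:
    """One per-minute smartwatch sample (hypothetical schema)."""
    minute: int       # minutes since the start of observation
    heart_rate: int   # beats per minute
    steps: int        # steps counted during this minute


# Illustrative thresholds only; these are assumptions, not clinical values.
HIGH_HR_BPM = 110
LOW_ACTIVITY_STEPS = 10
SUSTAINED_MINUTES = 5

# Hypothetical mapping from an inferred behavior to a responsive action.
ACTIONS = {"elevated_resting_heart_rate": "send_health_alert"}


def infer_behavior(readings: List[Reading]) -> Optional[str]:
    """IoT data -> behavior: label a sustained high heart rate during inactivity."""
    run = 0  # consecutive minutes matching the pattern
    for r in readings:
        if r.heart_rate >= HIGH_HR_BPM and r.steps <= LOW_ACTIVITY_STEPS:
            run += 1
            if run >= SUSTAINED_MINUTES:
                return "elevated_resting_heart_rate"
        else:
            run = 0  # pattern broken, start counting again
    return None


def respond(label: Optional[str]) -> Optional[str]:
    """Behavior -> action: choose the intervention that closes the loop."""
    return ACTIONS.get(label) if label else None
```

A high heart rate during exercise is deliberately not flagged, because the step count exceeds the inactivity threshold. Context turns a raw metric into a behavioral inference.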

1.3 The Three Pillars of IoB

The Internet of Behaviors is not a singular technology but a multidisciplinary framework built upon the convergence of three distinct but interdependent fields. Understanding these pillars is essential to grasping both the capabilities and the complexities of IoB.

Technology: This pillar encompasses the physical and digital infrastructure that enables the entire process. On the hardware side, it includes the vast array of IoT sensors, wearables, smartphones, smart cameras, and location-tracking devices that serve as the sensory organs of the IoB. On the software side, it includes the networking protocols, cloud computing platforms, and data storage solutions required to handle the immense volume, velocity, and variety of behavioral data.

Data Analytics: This is the cognitive core of the IoB, where raw data is transformed into insight. This pillar is overwhelmingly powered by Big Data technologies and, most critically, Artificial Intelligence (AI) and Machine Learning (ML). AI algorithms are essential for sifting through massive datasets to identify subtle patterns, correlate disparate data streams, and generate the predictive models that are the hallmark of IoB systems.

Behavioral Science: This pillar provides the theoretical lens through which the data is interpreted and the strategic framework for influencing action. Drawing from psychology, sociology, and economics, behavioral science offers insights into cognitive biases, decision-making heuristics, and motivational triggers. IoB systems use these principles to design effective interventions—often called "nudges"—that are calculated to steer individuals toward a desired behavior, whether it is making a purchase, adopting a healthier lifestyle, or complying with a public safety protocol.

The fusion of these three pillars is what gives the IoB its unique power. Technology gathers the data, data analytics finds the patterns, and behavioral science explains what those patterns mean and how to act upon them. This integrated approach allows for the creation of systems that can understand human behavior at an unprecedented scale and granularity, and then use that understanding to actively shape it.

II. The Engine of IoB: Artificial Intelligence and Behavioral Analytics

If the Internet of Behaviors is the framework for understanding and influencing human action, Artificial Intelligence (AI) is its indispensable engine. AI and its subfield, Machine Learning (ML), provide the computational power and analytical sophistication required to transform the chaotic torrent of data from IoT devices and digital interactions into structured, predictive, and actionable behavioral intelligence. Without the capabilities of modern AI, the grand vision of the IoB—a system that learns, predicts, and adapts in real time—would remain theoretical. The relationship is symbiotic: the IoT and our digital lives provide the massive datasets needed to train powerful AI models, and in turn, AI provides the analytical horsepower to unlock the value hidden within that data. This section dissects the critical functions of AI within the IoB, outlines the key technologies in the "Behavioral AI Stack," and details the specific machine learning techniques that power this new frontier of behavioral analytics.

2.1 AI as the Central Nervous System

AI functions as the central nervous system of the IoB, processing sensory input (data) and coordinating responses (behavioral interventions). Its primary role is to bring human-like learning and decision-making capabilities to the vast, interconnected IoB ecosystem, enabling the automation of both analysis and influence. While traditional analytics can describe past events, AI-powered systems can identify complex patterns that are imperceptible to human analysts, learn from new data without being explicitly reprogrammed, and make predictions about future outcomes.

This capability is what elevates IoB from a simple data aggregation platform to a proactive system. For example, in industrial settings, AI can analyze data from equipment sensors to predict machine failures before they occur, enabling predictive maintenance that saves significant costs. In a consumer context, AI can analyze browsing and purchase data to identify trends and anomalies, allowing businesses to make more informed decisions about marketing and product development. The core value proposition is the conversion of data into intelligent action. As one analysis notes, without AI-powered analytics, the data produced by IoT devices would have limited value; conversely, AI systems would struggle for relevance without the continuous stream of real-world data provided by the IoT.

2.2 The Behavioral AI Stack: From Data to Decision

The application of AI in IoB can be understood as a multi-layered process, or a "stack," that moves from raw data collection to sophisticated, real-time responses. This process is often referred to as Behavioral AI, a branch of artificial intelligence that specifically focuses on understanding, predicting, and responding to human behavior by combining AI techniques with behavioral science.

Behavioral Data Collection: The foundation of the stack is the continuous gathering of data from a multitude of sources. This includes explicit user actions such as website clicks, search queries, and purchase histories, as well as implicit data streams from IoT devices, including location data from smartphones, biometric readings from wearables, voice commands given to smart assistants, and even visual data from facial recognition systems.

Pattern Recognition and Predictive Analytics: This is the core analytical layer where AI models are trained on vast historical datasets to identify meaningful patterns and correlations. These models learn to recognize trends and establish "behavioral baselines"—a profile of an individual's or group's normal activity. Once a baseline is established, the system can engage in predictive analytics to anticipate future actions. A classic example is a streaming service like Netflix using a viewer's history to predict and recommend what they are likely to watch next.

Anomaly Detection: A direct and powerful application of pattern recognition is anomaly detection. By understanding what constitutes "normal" behavior for a user or a system, AI can instantly flag any activity that deviates significantly from that baseline. This is a cornerstone of modern cybersecurity, where it is used to identify potential security threats like fraudulent transactions, phishing attempts, or unauthorized account access. An unusual login location or an atypical data transfer can be flagged in real time as a potential breach.

Contextual Analysis and Sentiment Recognition: More advanced AI models, particularly those leveraging Natural Language Processing (NLP) and affective computing (or Emotion AI), add a layer of qualitative understanding. These systems go beyond tracking actions to interpret their context and the emotional state of the user. By analyzing the text of a review, the tone of a voice during a customer service call, or even subtle facial expressions captured by a camera, these systems can gauge sentiment (e.g., frustration, satisfaction). This allows for more nuanced and empathetic responses, such as a chatbot adjusting its tone based on a user's perceived frustration.

Dynamic Adaptation and Real-Time Response: The pinnacle of the Behavioral AI stack is its ability to learn and adapt continuously. As new data flows into the system, the AI models are constantly updated, refining their understanding of behavior and improving their predictive accuracy. This enables real-time adaptation, where the system's response is instantly tailored to the user's current actions. An adaptive learning platform like Duolingo, which adjusts the difficulty of lessons based on a student's real-time progress and mistakes, is a prime example of this dynamic capability.
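The baseline, anomaly-detection, and continuous-adaptation layers described above can be sketched together for a single numeric signal, such as transaction amounts. This is an illustrative sketch under simplifying assumptions: a z-score test over a running mean and variance (Welford's online algorithm) stands in for the far richer models a production system would use. The class name, threshold, and minimum sample count are hypothetical.

```python
import math


class BehavioralBaseline:
    """Running mean and variance of one behavior signal (Welford's algorithm)."""

    def __init__(self, z_threshold: float = 3.0, min_samples: int = 5):
        self.n = 0             # number of observations learned so far
        self.mean = 0.0        # running mean of the signal
        self._m2 = 0.0         # running sum of squared deviations
        self.z_threshold = z_threshold
        self.min_samples = min_samples

    def is_anomalous(self, value: float) -> bool:
        """Flag values that deviate strongly from the learned baseline."""
        if self.n < self.min_samples:
            return False  # not enough history to define "normal" yet
        std = math.sqrt(self._m2 / (self.n - 1))
        if std == 0:
            return value != self.mean
        return abs(value - self.mean) / std > self.z_threshold

    def update(self, value: float) -> None:
        """Fold a new observation into the baseline without storing history."""
        self.n += 1
        delta = value - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (value - self.mean)

    def observe(self, value: float) -> bool:
        """Score first, then learn, so an outlier does not shift the baseline."""
        flagged = self.is_anomalous(value)
        if not flagged:
            self.update(value)
        return flagged
```

Feeding the object a series of ordinary amounts and then a single very large one would flag only the large one. Whether flagged values should later be folded into the baseline is a design choice; this sketch leaves them out.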

2.3 Key AI/ML Techniques in Behavioral Analytics

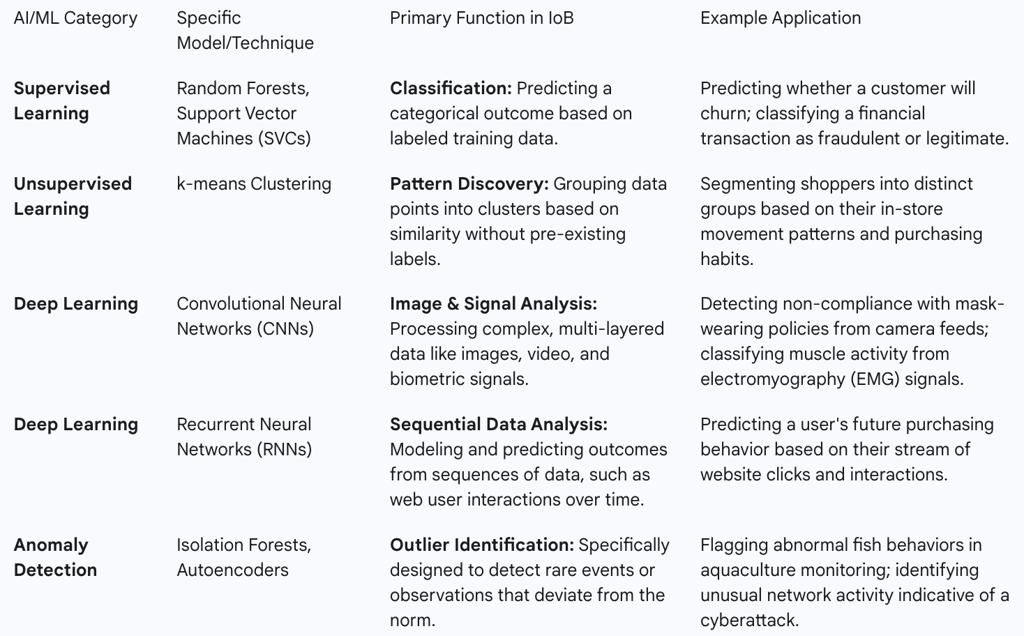

The functions described in the Behavioral AI stack are powered by a diverse set of specific machine learning and deep learning models. Understanding these techniques is crucial for appreciating the technical capabilities and limitations of IoB systems.

The selection of a particular technique depends on the specific problem being addressed—from classifying known behaviors to discovering new ones. The increasing sophistication of these models, particularly in the realm of deep learning, is what enables the IoB to analyze increasingly complex and nuanced forms of human behavior, such as emotion and intent, with growing accuracy. This technical evolution transforms the relationship between organizations and individuals from a reactive, transactional one into a continuously managed, proactive, and predictive one. The system is not merely a passive observer of past events; it is an active participant, constantly running simulations of an individual's future self and intervening with targeted "nudges" designed to guide them toward a predetermined outcome.

III. Sectoral Transformation: Applications and Use Cases of AI-Powered IoB

The convergence of AI and the Internet of Behaviors is not a future-state concept; it is an active force reshaping industries today. By providing an unprecedented ability to understand, predict, and influence human action, IoB is becoming a critical tool for enhancing efficiency, personalizing services, and creating new value propositions. Its applications span the commercial, public, and private sectors, demonstrating a transformative potential that is both broad and deep. This section provides a comprehensive survey of IoB's real-world and emerging use cases, detailing how behavioral data is being leveraged to drive specific outcomes in key domains.

3.1 Commerce and Marketing: The Hyper-Personalization Engine

The commercial sector, particularly retail and marketing, has been one of the earliest and most aggressive adopters of IoB principles. For these industries, understanding consumer behavior is paramount, and IoB provides the tools to do so at a scale and granularity previously unimaginable.

Behavioral Targeting and Personalized Advertising: IoB represents a significant evolution from traditional demographic-based marketing. It enables hyper-personalization by analyzing a rich tapestry of real-time behavioral data, including online browsing history, social media interactions, search queries, physical location, and even biometric data from wearable devices. This allows advertisers to craft highly relevant and timely campaigns that resonate with an individual's current context and inferred interests, thereby increasing engagement and conversion rates. Technology giants like Google and Amazon are pioneers in this space, using sophisticated IoB-driven algorithms to power their recommendation engines and targeted advertising ecosystems, which analyze everything from past purchases to the amount of time a user spends viewing a product page.

Customer Journey Analysis: IoB provides businesses with a holistic view of the entire customer journey. By integrating data from multiple touchpoints—from the initial social media ad that sparked interest, to the in-store visit tracked by beacons, to the final online purchase—companies can map out the complex path a consumer takes and identify the key factors that influence their decisions. This deep understanding allows for the optimization of each stage of the value chain and the creation of more effective customer interaction strategies.

Enhanced Customer Experience (CX): The insights gleaned from IoB are used not only for marketing but also for improving the overall customer experience. By analyzing how users interact with a website or product, companies can optimize user experience (UX) design to be more intuitive and efficient. In physical retail, data on shopper movement can inform store layout and product placement. Furthermore, IoB enables real-time interventions, such as point-of-sale (POS) notifications with targeted offers or proactive customer support, which can resolve issues faster and build stronger brand loyalty.

3.2 Healthcare and the Internet of Bodies: Proactive and Personalized Wellness

In healthcare, the application of IoB is often referred to as the "Internet of Bodies," a network of devices that are worn, implanted, or ingested to monitor and interact with the human body. This domain represents one of the most promising and personal applications of IoB, shifting the focus of medicine from reactive treatment to proactive and continuous wellness management.

Remote Patient Monitoring (RPM): This is a cornerstone application of the Internet of Bodies. Wearable sensors like smartwatches and fitness trackers, along with implantable medical devices such as smart pacemakers, continuous glucose monitors, and digital pills, collect a constant stream of physiological data. AI algorithms analyze this real-time data to track vital signs, physical activity, sleep quality, and medication adherence. This continuous monitoring is particularly valuable for managing chronic conditions like heart disease and diabetes, as the system can detect subtle irregularities or deviations from a patient's baseline and trigger automated alerts to both the patient and their healthcare provider, enabling early intervention and potentially preventing acute health crises.

Improving Medication Adherence: Non-adherence to prescribed medication is a major challenge in healthcare. IoB addresses this through devices like smart pill bottles and connected inhalers that track usage. More importantly, the system can analyze patterns to infer why a patient might be missing doses, whether due to simple forgetfulness, concerns about side effects, or a lack of motivation. Based on this inference, the system can deliver targeted behavioral nudges, such as personalized reminders via a smartphone app, educational content about the medication, or suggestions to link taking a pill with a daily routine like brushing teeth.

Mental Health Support: IoB is also being applied to mental wellness. By passively monitoring behavioral indicators that correlate with mental state—such as changes in sleep patterns, reduced social interaction (inferred from digital communication logs), or shifts in speech patterns and tone—AI systems can detect early warning signs of conditions like depression or anxiety. This can prompt timely and discreet interventions, connecting the individual with digital mental health tools, teletherapy services, or encouraging them to seek professional care.

Public Health and Safety: The utility of IoB in public health was starkly demonstrated during the COVID-19 pandemic. Organizations used computer vision systems to monitor compliance with mask-wearing mandates and deployed RFID tags at handwashing stations to track how often employees were sanitizing their hands. This data was used to provide real-time reminders and to analyze which behaviors were most effective at mitigating the spread of the virus, informing public health policies.

3.3 Public Sector, Smart Cities, and Social Services

Governments and municipalities are increasingly adopting IoB principles to improve the efficiency of public services, create more sustainable urban environments, and formulate more effective policies.

Smart City Management: IoB is a foundational technology for the smart city concept. By analyzing aggregated and anonymized behavioral data, cities can optimize essential services. For example, data from traffic sensors, GPS in vehicles, and public transit usage is used to predict traffic patterns, dynamically adjust traffic light timings to reduce congestion, and optimize bus and train schedules to match passenger demand. In another application, sensors in waste bins can signal when they are full, allowing for the optimization of collection routes, which saves fuel and reduces emissions. Similarly, monitoring real-time energy and water consumption patterns across a city can help manage demand and promote sustainability initiatives.

Data-Driven Public Policy: The field of "behavioral public administration" leverages insights from behavioral science and data analytics to design more effective policies. Governments can use IoB to gather real-time data on citizen behavior and public sentiment to understand how people will likely respond to a new regulation or program. This allows for the creation of evidence-based policies that "nudge" citizens toward desired outcomes, such as increasing retirement savings, encouraging water conservation, or improving compliance with tax laws.

High-Stakes Social Services: One of the most powerful and controversial applications of IoB principles is in social services, particularly child welfare. Some agencies are using predictive analytics tools that analyze historical administrative data—such as prior involvement with the justice system, substance abuse history, and housing instability—to generate a risk score for child maltreatment. These scores are intended to help social workers prioritize cases and allocate resources more effectively. However, this use case highlights the profound ethical challenges of using behavioral predictions to inform decisions that can lead to family separation, as the models can perpetuate existing racial and socioeconomic biases present in the data.

3.4 Finance, Insurance, and the Workplace

The principles of behavioral analysis are also being applied in the financial, insurance, and corporate sectors to manage risk, personalize products, and monitor performance.

Behavioral Risk Scoring: The financial industry is using IoB to enhance risk assessment. For fraud detection, AI systems establish a baseline of a customer's normal transaction behavior and can instantly flag anomalies—such as a purchase from an unusual location or a sudden large withdrawal—as potentially fraudulent. In lending, some firms are exploring how behavioral data, such as spending habits and bill payment consistency, can supplement traditional credit scores to create a more holistic and accurate assessment of creditworthiness.

Usage-Based Insurance (UBI): The insurance industry has been a prominent adopter of IoB. Vehicle telematics systems, which use sensors in a car or a smartphone app to track driving behaviors like speed, acceleration, braking patterns, and mileage, are a prime example. This data allows insurance companies to offer personalized premiums that directly reflect an individual's actual risk profile, rewarding safer drivers with lower costs.

Workplace Monitoring: Corporations are deploying IoB technologies to monitor employee behavior for productivity and safety. In logistics and warehousing, companies have patented wristbands that can track the location and hand movements of employees to identify inefficiencies. In industries like transportation and heavy manufacturing, sensors can be used to monitor operators for signs of fatigue or distraction, triggering alerts to prevent accidents.
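To make the usage-based insurance case above concrete, the following Python sketch shows one way telematics summaries could be turned into a personalized premium. Everything in it is an assumption made for illustration: the `TripSummary` fields, the weights, and the 0.8 to 1.5 multiplier range are hypothetical and do not reflect any insurer's actual pricing model.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TripSummary:
    """Aggregated telematics for one trip (hypothetical schema)."""
    miles: float
    hard_brakes: int          # count of harsh braking events
    speeding_minutes: float   # minutes driven above the posted limit


def risk_multiplier(trips: List[TripSummary]) -> float:
    """Map observed driving behavior to a premium multiplier in [0.8, 1.5]."""
    total_miles = sum(t.miles for t in trips)
    if total_miles == 0:
        return 1.0  # no driving data: fall back to the base rate
    # Normalize by distance so frequent drivers are not penalized for mileage alone.
    brakes_per_100mi = 100 * sum(t.hard_brakes for t in trips) / total_miles
    speeding_per_100mi = 100 * sum(t.speeding_minutes for t in trips) / total_miles
    # Hypothetical weights: start from a 20% discount and add surcharges.
    raw = 0.8 + 0.05 * brakes_per_100mi + 0.02 * speeding_per_100mi
    return max(0.8, min(1.5, raw))


def monthly_premium(base: float, trips: List[TripSummary]) -> float:
    """Apply the behavioral multiplier to a base premium."""
    return round(base * risk_multiplier(trips), 2)
```

Clamping the multiplier bounds how far behavior alone can move the price. This is one simple way a designer might limit the effect of noisy sensor data on an individual customer.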

Across all these sectors, a common thread emerges: behavioral data generated in one area of a person's life is increasingly being used to make decisions in another. Health data from a fitness app can influence an insurance premium. A driving route tracked for navigation can inform a retail marketing campaign. This cross-pollination of data creates new and complex "behavioral value chains," breaking down traditional data silos and, in the process, challenging long-held expectations of privacy and context. The true power of IoB lies not in its application within a single sector, but in its ability to aggregate and re-contextualize a person's behavior across the entirety of their digital and physical life.

IV. The Pandora's Box: Navigating the Security, Privacy, and Ethical Minefield

While the Internet of Behaviors promises a future of unprecedented efficiency, personalization, and convenience, its implementation opens a veritable Pandora's box of profound and complex risks. The IoB framework not only inherits the well-documented vulnerabilities of its foundational IoT layer but also amplifies them significantly by adding a layer of highly sensitive, personal, and psychologically potent behavioral data. The very capabilities that make IoB so powerful—its ability to monitor, analyze, and influence human action—are also what make it so dangerous. Navigating this landscape requires a clear-eyed assessment of the multifaceted risks across cybersecurity, individual privacy, and fundamental ethics.

4.1 Cybersecurity Vulnerabilities: An Expanded Attack Surface

The security posture of any IoB system is only as strong as its weakest link, and its foundation is built on notoriously insecure ground. The massive and heterogeneous network of IoT devices that collect behavioral data presents a vast and porous attack surface for malicious actors.

Inherited IoT Risks: IoB systems are fundamentally dependent on data from IoT devices, which are often designed with a focus on cost and convenience rather than security. Common, critical vulnerabilities include the use of weak or hardcoded default passwords, a lack of encryption for data both in transit and at rest, infrequent or nonexistent firmware updates to patch security holes, and insecure network services that leave devices exposed to the public internet. Each of these billions of devices—from a smart camera to a connected car—represents a potential entry point for an attacker to compromise not just the device itself, but the broader network and the sensitive data it transmits.

High-Value Data Targets: The data aggregated and processed within IoB systems is exceptionally valuable to cybercriminals. Unlike a breach of credit card numbers, which can be cancelled and reissued, a breach of behavioral data is a compromise of an individual's intimate patterns of life. A successful attack could expose a person's daily routines, locations, health conditions, financial habits, social connections, and psychological predispositions. This information can be sold on the dark web, used for identity theft, or leveraged for blackmail and extortion.

Weaponized Behavioral Insights: Perhaps the most insidious cybersecurity threat is the potential for attackers to use stolen behavioral data to craft hyper-personalized and devastatingly effective social engineering attacks. Armed with knowledge of an individual's habits, interests, and even their emotional state, an attacker can design phishing emails, text message scams, or fraudulent communications that are far more persuasive than generic attacks. For example, an attacker could use location data to time a fraudulent message to coincide with a target's arrival at a specific location, or use purchase history to create a fake but highly convincing "order confirmation" email containing malware.

Physical Harm and Bodily Threat: In the context of the Internet of Bodies, the consequences of a cyberattack transcend data theft and can manifest as direct physical harm. The internet connectivity of implanted medical devices like pacemakers, insulin pumps, and neurostimulators introduces the risk that a malicious actor could remotely tamper with their functioning. Security researchers have demonstrated the feasibility of such attacks, raising the chilling possibility that a hacker could manipulate a device to deliver an incorrect dosage of medication or disrupt a life-sustaining function, potentially causing severe injury or death.
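The inherited IoT weaknesses listed earlier in this subsection (default passwords, missing encryption, stale firmware, exposed services) lend themselves to a simple automated check. The following is a minimal sketch, not a real audit tool: the `DeviceConfig` fields, the `KNOWN_DEFAULT_PASSWORDS` set, and the one-year firmware threshold are all illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical list of factory-default credentials; a real audit would
# rely on a maintained, vendor-specific list.
KNOWN_DEFAULT_PASSWORDS = {"admin", "password", "12345", "root"}


@dataclass
class DeviceConfig:
    name: str
    password: str
    encrypts_in_transit: bool
    encrypts_at_rest: bool
    last_firmware_update: date
    exposed_to_internet: bool


def audit_device(device: DeviceConfig, today: date,
                 max_firmware_age_days: int = 365) -> list[str]:
    """Return a list of findings for one device (empty if none apply)."""
    findings = []
    if device.password.lower() in KNOWN_DEFAULT_PASSWORDS:
        findings.append("default or weak password")
    if not device.encrypts_in_transit:
        findings.append("no encryption in transit")
    if not device.encrypts_at_rest:
        findings.append("no encryption at rest")
    if (today - device.last_firmware_update).days > max_firmware_age_days:
        findings.append("stale firmware")
    if device.exposed_to_internet:
        findings.append("service exposed to public internet")
    return findings


# Example: a smart camera that still uses its factory password.
camera = DeviceConfig("smart-camera", "admin", False, True,
                      date(2021, 3, 1), True)
camera_findings = audit_device(camera, today=date(2024, 1, 1))
print(camera_findings)
```

A check of this kind only surfaces configuration weaknesses; it says nothing about vulnerabilities in the device firmware itself.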

4.2 Privacy Intrusions: The End of Anonymity?

The core function of IoB—the continuous collection and analysis of behavioral data—is fundamentally in tension with established principles of privacy. The scale and intimacy of the data collection required for IoB systems create a state of pervasive surveillance that challenges an individual's ability to control their personal information.

Pervasive Surveillance and Granular Profiling: IoB systems operate by constructing comprehensive and continuously updated profiles of individuals based on their actions. This mass collection of personal data, often aggregated from dozens of sources and processed by multiple organizations for various purposes, directly conflicts with foundational data protection principles such as data minimization (collecting only what is necessary) and purpose limitation (using data only for the purpose for which it was collected). The result is a digital dossier of a person's life, far more detailed than any traditional record.

Lack of Transparency and Meaningful Consent: A cornerstone of privacy is informed consent, yet the nature of IoB makes this exceedingly difficult to achieve. Data collection is often seamless and invisible, occurring in the background through devices like smart speakers or surveillance cameras. Many IoT devices lack screens or clear user interfaces, making it impossible to provide users with adequate notice about data practices or to offer them easy-to-use controls. As a result, users are frequently unaware of the full extent of the data being collected about them, who has access to it, and how it is being used, rendering the notion of "consent" largely meaningless.

Predictive Harm and Group Privacy: The privacy risks of IoB extend beyond the data that is explicitly collected. AI's ability to make inferences can lead to what is known as "predictive harm." An AI model might infer sensitive attributes (such as a person's sexual orientation, political affiliation, or an undiagnosed health condition) from seemingly innocuous data like their browsing history or social media "likes." Furthermore, IoB threatens not only individual privacy but also "group privacy." By analyzing aggregated data, AI can identify patterns associated with specific demographic groups, leading to stereotyping and collective discrimination, even if individual identities are anonymized.
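The principles of data minimization and purpose limitation mentioned above can be made concrete with a small filter that discards every field not declared as necessary for a stated purpose. This is a sketch under stated assumptions: the purposes, field names, and the `ALLOWED_FIELDS` mapping are hypothetical and not drawn from any regulation or product.

```python
# Hypothetical mapping from a declared processing purpose to the fields
# that purpose actually needs.
ALLOWED_FIELDS = {
    "fitness_coaching": {"user_id", "step_count", "heart_rate"},
    "delivery": {"user_id", "postal_address"},
}


def minimize(record: dict, purpose: str) -> dict:
    """Keep only the fields declared as necessary for the stated purpose."""
    if purpose not in ALLOWED_FIELDS:
        # Purpose limitation: refuse processing for undeclared purposes.
        raise ValueError(f"undeclared purpose: {purpose}")
    allowed = ALLOWED_FIELDS[purpose]
    return {key: value for key, value in record.items() if key in allowed}


raw = {
    "user_id": "u1",
    "step_count": 8200,
    "heart_rate": 71,
    "precise_location": (52.52, 13.40),
    "browsing_history": ["..."],
}
minimized = minimize(raw, "fitness_coaching")
print(minimized)  # location and browsing history are dropped
```

Filtering at collection time limits what can later be inferred, although it does not by itself prevent inferences from the fields that remain.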

4.3 Ethical Dilemmas: Manipulation, Bias, and Autonomy

Beyond the technical and legal challenges of security and privacy lie the most profound questions about the IoB's impact on society. These are the ethical dilemmas concerning the very nature of human autonomy, fairness, and the power to influence choice.

Behavioral Manipulation vs. Benign Nudging: The central ethical quandary of IoB is the blurred line between benevolent influence and malicious manipulation. While the technology can be used to "nudge" people toward positive behaviors, such as encouraging exercise or promoting safe driving, the same mechanisms can be used to exploit psychological vulnerabilities for commercial or political gain. This raises fundamental questions about an individual's autonomy and their right to make decisions free from pervasive, algorithmically driven coercion. At what point does a personalized recommendation become a form of psychological manipulation that undermines free will?

Algorithmic Bias and Discrimination: IoB systems are powered by AI models, and these models are a reflection of the data they are trained on. If the historical data used for training contains existing societal biases related to race, gender, socioeconomic status, or other protected characteristics, the AI will learn, codify, and even amplify those biases in its decision-making. This can lead to deeply unfair and discriminatory outcomes. For example, a biased algorithm could systematically offer higher interest rates to individuals from minority neighborhoods, screen out qualified job applicants based on gender-coded language in their resumes, or, in the case of child welfare, disproportionately flag families of color for investigation.

Inaccuracy and Lack of Contestability: Inferences about human behavior are probabilistic, not certain, and can often be wrong. An algorithm might misinterpret a set of data points and draw an incorrect conclusion about a person's intentions or character. However, in an opaque IoB system, individuals may have no knowledge that such a judgment has been made and no meaningful process to appeal or correct the error. This lack of contestability is especially dangerous when these algorithmic judgments are used to make high-stakes decisions about access to credit, employment, insurance, or public services.
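One common way to make the algorithmic bias described above measurable is to compare approval rates across groups. The sketch below computes a disparate impact ratio and flags it against a 0.8 threshold; that threshold is borrowed from the "four-fifths" rule of thumb in US employment-selection guidance and is used here purely as an illustrative assumption, as are the group labels and sample decisions.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Map each group to its approval rate, given (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest group rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved in any group, so no disparity
    return min(rates.values()) / highest


# Hypothetical outcomes: group A approved 8 of 10, group B approved 5 of 10.
decisions = ([("A", True)] * 8 + [("A", False)] * 2
             + [("B", True)] * 5 + [("B", False)] * 5)
rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
flagged = ratio < 0.8  # illustrative threshold, not a legal test
print(rates, round(ratio, 3), flagged)
```

A single ratio is a coarse screening signal. It cannot show why a disparity exists, and a passing value does not establish that a system is fair.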

These risks collectively point to the creation of a new and profound form of power imbalance. The relationship between the entity deploying an IoB system—be it a corporation or a government—and the individual being monitored is inherently unequal. One side possesses vast data resources, opaque and complex AI models, and a sophisticated understanding of behavioral psychology. The other side, the individual, has limited visibility into how their data is being used, how the algorithms function, and the subtle ways in which their choices are being shaped. This "algorithmic power asymmetry" shifts agency from the individual to the institution, creating a system of governance and control that operates at a subconscious, data-driven level, with significant implications for personal freedom and democratic society.

V. Governance and Regulation in the Age of Behavioral Data

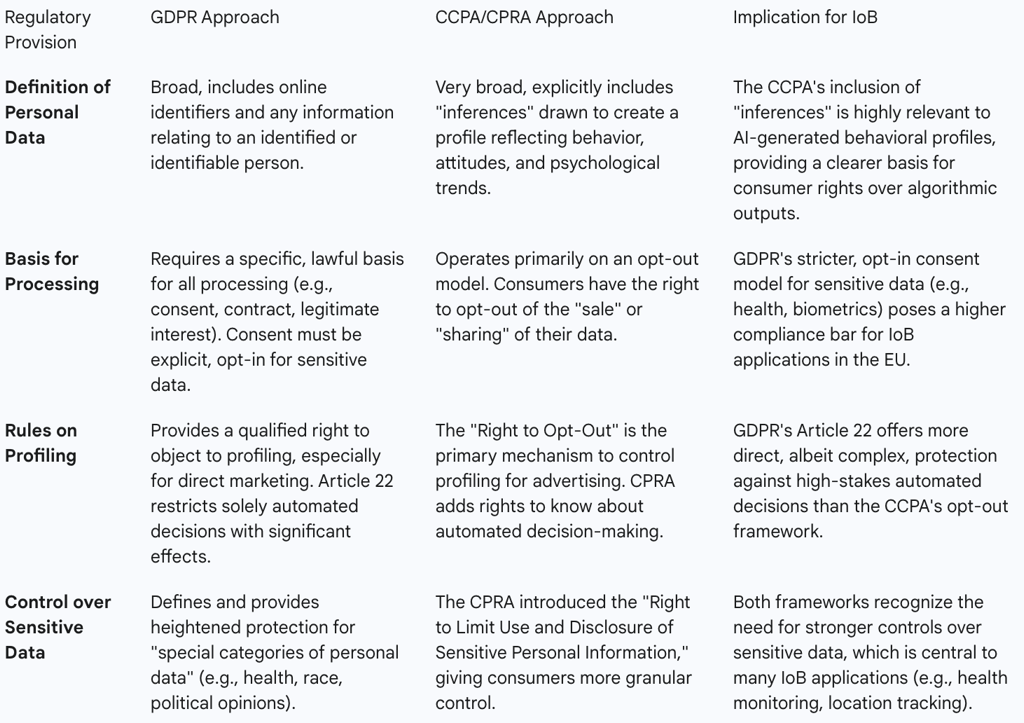

The rapid proliferation of the Internet of Behaviors presents a formidable challenge to existing legal and regulatory frameworks. While comprehensive data protection laws like the European Union's General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA) provide a crucial foundation for governance, they were primarily designed in an era focused on data collection and storage. The unique nature of IoB—with its emphasis on inferential analysis, predictive profiling, and active behavioral influence—strains the limits of these regulations and reveals significant governance gaps. This section analyzes the applicability and limitations of current legal paradigms in addressing the complex challenges posed by AI-powered behavioral data processing.

5.1 Applying Existing Frameworks: GDPR and IoB

The GDPR is arguably the world's most comprehensive data protection law, and its principles are highly relevant to the operation of IoB systems within the EU and to organizations outside the EU that offer goods or services to, or monitor the behavior of, individuals in the EU. The regulation's broad definition of "personal data" as any information relating to an identifiable person comfortably includes the location, biometric, and activity data central to IoB.

IoB practices are subject to scrutiny under several core GDPR principles:

Lawfulness, Fairness, and Transparency: Article 5 of the GDPR mandates that data processing be lawful, fair to the data subject, and transparent. Lawfulness in turn requires one of the legal bases listed in Article 6, such as consent. This presents a significant hurdle for IoB systems that rely on seamless, often invisible, data collection from devices with limited user interfaces, making it difficult to provide clear notice and obtain valid consent.

Purpose Limitation and Data Minimization: The GDPR requires that data be collected for "specified, explicit and legitimate purposes" and be limited to what is "necessary" for those purposes. The operating model of many IoB systems, which involves collecting vast and diverse datasets to discover unforeseen correlations and build comprehensive behavioral profiles, is in direct tension with these principles.

Data Subject Rights: The GDPR grants individuals a suite of powerful rights, including the right to access their data, the right to rectification of inaccurate data, the right to erasure ("right to be forgotten"), and the right to object to processing, particularly for profiling related to direct marketing. While crucial, exercising these rights can be practically difficult within complex IoB ecosystems where data is aggregated, transformed, and shared across multiple entities.

Automated Decision-Making and Profiling: Article 22 of the GDPR provides specific protections for individuals against decisions based "solely on automated processing, including profiling, which produces legal effects concerning him or her or similarly significantly affects him or her." This provision is directly applicable to high-stakes IoB applications, such as algorithmic credit scoring or risk assessment in social services. It grants the data subject the right to obtain human intervention, to express their point of view, and to contest the decision. However, the scope of what constitutes a "significant effect" and the exceptions to this rule (e.g., when necessary for a contract or based on explicit consent) make its application complex and subject to legal debate.
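The Article 22 logic described above can be pictured as a routing step placed in front of any automated decision. The following sketch is a deliberate simplification and not legal guidance: the `Decision` fields and the three outcome labels are hypothetical, and the single `exception_applies` flag collapses exceptions that in practice require careful legal assessment.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    subject_id: str
    outcome: str
    solely_automated: bool           # no meaningful human involvement
    legal_or_similar_effect: bool    # e.g. refusal of credit
    exception_applies: bool          # e.g. contract necessity or explicit consent


def within_article22_scope(decision: Decision) -> bool:
    """True when the decision is both solely automated and significant."""
    return decision.solely_automated and decision.legal_or_similar_effect


def route(decision: Decision) -> str:
    """Return a hypothetical handling label for the decision."""
    if not within_article22_scope(decision):
        return "proceed"
    if not decision.exception_applies:
        return "blocked"
    # An exception applies: safeguards such as human intervention and a
    # way to contest the decision must accompany it.
    return "proceed_with_safeguards"


credit_denial = Decision("s1", "deny", True, True, False)
consented_scoring = Decision("s2", "deny", True, True, True)
human_reviewed = Decision("s3", "recommend", False, True, False)

print(route(credit_denial), route(consented_scoring), route(human_reviewed))
```

The hard part in practice is deciding how the two boolean inputs are set, which is exactly where the legal debate noted above arises.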

5.2 Applying Existing Frameworks: CCPA and IoB

In the United States, the California Consumer Privacy Act (CCPA), as amended by the California Privacy Rights Act (CPRA), provides the most robust state-level data protection framework. It grants California residents a set of rights concerning their personal information collected by businesses that meet certain revenue or data processing thresholds.

The CCPA is particularly relevant to IoB due to its expansive definition of "personal information." Crucially, the law explicitly includes "inferences drawn from any of the information identified...to create a profile about a consumer reflecting the consumer's preferences, characteristics, psychological trends, predispositions, behavior, attitudes, intelligence, abilities, and aptitudes". This definition directly targets the analytical outputs of IoB systems, not just the raw data inputs.

Key rights under the CCPA that impact IoB include:

Right to Know: Consumers have the right to request that a business disclose the categories and specific pieces of personal information it has collected about them, the sources of that information, and the purposes for its collection and use.

Right to Delete: Consumers can request the deletion of their personal information held by a business, subject to certain exceptions.

Right to Opt-Out of Sale/Sharing: This is a cornerstone of the CCPA. It gives consumers the right to direct a business not to "sell" or "share" their personal information. The definitions of "sell" and "share" are broad and can encompass the data transfers that underpin the behavioral advertising ecosystem, a major application of IoB.

Right to Limit Use and Disclosure of Sensitive Personal Information: The CPRA introduced this right, allowing consumers to restrict the use of sensitive data (such as precise geolocation, health data, and biometric information) to only that which is necessary to provide the requested goods or services. This provides a powerful tool for individuals to limit some of the more intrusive data collection practices of IoB.
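The opt-out and sensitive-data rights listed above translate into checks that run before any data leaves a business. The sketch below is illustrative only: it treats a stored preference or a Global Privacy Control browser signal (the `Sec-GPC: 1` request header) as an opt-out, and the `SENSITIVE_FIELDS` set simply reuses the examples named in the text. The function names and record fields are assumptions.

```python
# Field names are hypothetical; the categories mirror the examples in the text.
SENSITIVE_FIELDS = {"precise_geolocation", "health_data", "biometric_data"}


def opted_out_of_sale(headers: dict, stored_opt_out: bool) -> bool:
    """Treat a stored preference or a GPC signal as an opt-out request."""
    normalized = {name.lower(): value for name, value in headers.items()}
    return stored_opt_out or normalized.get("sec-gpc") == "1"


def fields_to_share(record: dict, headers: dict,
                    stored_opt_out: bool, limit_sensitive: bool) -> dict:
    """Return the subset of a record that may be passed to a third party."""
    if opted_out_of_sale(headers, stored_opt_out):
        return {}
    if limit_sensitive:
        return {key: value for key, value in record.items()
                if key not in SENSITIVE_FIELDS}
    return dict(record)


record = {
    "email": "a@example.com",
    "precise_geolocation": (34.05, -118.24),
    "purchase_category": "shoes",
}
shared_with_gpc = fields_to_share(record, {"Sec-GPC": "1"}, False, False)
shared_limited = fields_to_share(record, {}, False, True)
print(shared_with_gpc, shared_limited)
```

Placing the check at the point of sharing means that a consumer's preference is applied regardless of which internal system requested the transfer.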

5.3 The Regulatory Gaps and Future Challenges

Despite the strengths of frameworks like GDPR and CCPA, they reveal significant gaps when confronted with the unique challenges of AI-powered IoB.

The Problem of "Inferred" Data and Algorithmic Outputs: While the CCPA commendably includes "inferences" in its definition of personal information, the legal status and ownership of these algorithmic outputs remain a gray area globally. Are a company's AI-generated predictions about a person's future health or creditworthiness the personal data of the individual, or are they the proprietary intellectual property of the company? Existing laws do not provide a clear answer, making it difficult for individuals to access, correct, or control the algorithmic judgments made about them.

The Illusion of "Meaningful Consent": The principle of informed consent, central to data protection law, is stretched to its breaking point by IoB. Given the complexity of machine learning models, it is often impossible for an organization to transparently explain how a user's data will be processed and for what specific purposes it might be used in the future, as the system is designed to learn and evolve. This opacity makes it nearly impossible for a user to give truly informed consent.

Addressing Algorithmic Harm: The primary focus of data protection laws is on the proper handling and security of data. They are less equipped to address the downstream societal harms that can arise from the use of that data, such as systemic discrimination caused by biased algorithms or the psychological harms of digital manipulation. Regulating the data is not the same as regulating the algorithm's impact.

This reveals a fundamental mismatch: current regulations are largely "input-focused," designed to govern the collection, consent, and storage phases of the data lifecycle. The unique power and risk of IoB, however, reside in its "outputs"—the behavioral predictions, psychological profiles, and targeted nudges it generates. A company could theoretically be in full compliance with all data collection and storage rules while simultaneously using that legally obtained data to build a manipulative system that exploits users' cognitive biases. The law effectively governs the ingredients but provides little oversight of the potentially toxic recipe. This points to a critical governance gap and the need for a new regulatory paradigm focused on "algorithmic accountability," fairness, and the ethics of influence, extending beyond the traditional confines of data privacy.

VI. The Future Trajectory: Projections, Trends, and Societal Implications

The Internet of Behaviors is not a static concept but a rapidly evolving technological frontier. Its trajectory is shaped by exponential growth in data generation, continuous advancements in artificial intelligence, and increasing investment from both commercial and governmental entities. Projecting this trajectory reveals a future where the analysis and influencing of behavior become deeply integrated into the fabric of daily life, converging with other emerging technologies to create systems of unprecedented power and complexity. This section synthesizes market forecasts and expert commentary to outline the future of IoB and contemplate its profound long-term societal implications.

6.1 Market Growth and Widespread Adoption

Industry analysts project a rapid and pervasive adoption of IoB technologies in the coming years. Forecasts from Gartner have been particularly influential, predicting that by 2023, the individual activities of 40% of the global population would be digitally tracked with the intent to influence their behavior. Looking further ahead, the firm estimated that by the end of 2025, more than half of the world's population would be subject to at least one IoB program, whether from a commercial or governmental source.

This rapid adoption is driven by significant economic incentives. The global IoB market is forecast to experience substantial growth, expanding from $386 billion in 2022 to a projected $811 billion by 2032. This level of investment indicates a strong belief across industries that behavioral insights are a critical asset for gaining a competitive edge, improving operational efficiency, and creating new revenue streams.
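The two market figures quoted above imply a compound annual growth rate, which makes the forecast easier to compare with other projections. The short calculation below uses only the numbers in the text; the function name `cagr` is an illustrative choice.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1 / years) - 1


# $386 billion in 2022 growing to $811 billion in 2032 (figures from the text).
implied_rate = cagr(386, 811, 2032 - 2022)
print(f"{implied_rate:.1%}")  # prints 7.7%
```

In other words, the forecast corresponds to the market slightly more than doubling over ten years, at a steady growth rate of roughly 7.7% per year.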

6.2 Convergence with Emerging Technologies

The future impact of IoB will be magnified through its convergence with other transformative technologies. This technological fusion will enable more sophisticated, autonomous, and immersive forms of behavioral analysis and interaction.

Agentic AI: The current paradigm of AI often involves systems that provide predictions or recommendations for human decision-makers. The next evolution is toward "Agentic AI"—more autonomous AI systems capable of setting their own goals and taking actions in the digital and physical worlds to achieve them. When combined with IoB, this could lead to AI agents that act as personal managers, proactively making decisions and taking actions on a user's behalf based on a deep, continuous analysis of their behavior. For example, an AI agent could automatically adjust an investment portfolio, schedule medical appointments, and modify a connected home's environment to optimize for health and productivity, all based on IoB data streams.

Spatial Computing (AR/VR): The rise of spatial computing platforms, including augmented reality (AR) and virtual reality (VR) headsets, will create new and incredibly rich sources of behavioral data. These devices can capture not just what a user sees and does in an immersive environment, but also intimate biometric and behavioral cues such as eye-tracking, pupil dilation, emotional responses inferred from facial expressions, and physical gestures. The IoB will leverage this data to analyze user engagement and emotional resonance with unprecedented detail, opening new frontiers for entertainment, training, and marketing, but also raising acute privacy concerns.

Digital Twins: The engineering concept of a "digital twin"—a real-time virtual representation of a physical object or system—is being extended to humans. A personal digital twin would be a dynamic, virtual model of an individual, continuously updated with data from the Internet of Bodies and other IoB sources. This model could be used to simulate the effects of different medical treatments, predict the onset of disease, or test the impact of lifestyle changes with remarkable accuracy. While the potential benefits for personalized medicine are immense, the creation of such a comprehensive digital replica of a person represents the ultimate form of behavioral profiling.

6.3 Long-Term Societal Impact

The widespread integration of IoB into society has the potential to catalyze profound long-term shifts in social norms, power structures, and even our understanding of human identity.

The Quantified and Optimized Society: IoB dramatically accelerates the "quantified self" movement, where every aspect of life, from sleep to productivity to social interaction, is tracked and measured. On a societal scale, this can create immense pressure for optimization and conformity. Individuals may be judged not just on their actions, but on how their data-driven behavioral profiles align with algorithmically defined benchmarks of "health," "creditworthiness," or "productivity." This could lead to a society where deviation from the norm becomes more difficult and carries social or economic penalties.

Erosion of Trust and Social Cohesion: The awareness of pervasive surveillance and the potential for hidden algorithmic manipulation can lead to a significant erosion of public trust in both corporations and government institutions. If people feel that their choices are being constantly engineered for profit or control, it can foster cynicism and social friction. Furthermore, the amplification of bias through IoB algorithms could deepen existing social divides and institutionalize discrimination, further fraying the fabric of social cohesion.

Redefining Autonomy and Humanity: The most fundamental long-term implication of IoB, particularly its "Internet of Bodies" manifestation, is its challenge to our core concepts of what it means to be human. As technology gains the capacity to monitor, analyze, and even modify our internal bodily functions and external behaviors, it blurs the lines between human and machine, and between authentic choice and programmed response. This raises critical philosophical questions about free will, personal identity, and the nature of human autonomy in an age of algorithmic influence.

The ultimate trajectory of this technology points toward the creation of a "choice architecture" at a societal scale. In behavioral science, choice architecture refers to the practice of designing the context in which people make decisions to influence their choices. When this principle is applied globally, powered by the pervasive data collection of IoT and the predictive power of AI, it moves beyond influencing individual purchases. It becomes a tool for architecting the decision-making environment for entire populations. This could be wielded to steer society toward laudable collective goals, such as improving public health outcomes or promoting environmental sustainability. However, it could equally be used as a powerful instrument of social control, creating a future where individual behavior is subtly and persistently managed, and deviation from an algorithmically defined norm becomes increasingly difficult. This represents a new and potent form of governance that operates at a subconscious, data-driven level, the implications of which we are only beginning to comprehend.

VII. Strategic Recommendations and Conclusion

The Internet of Behaviors, supercharged by Artificial Intelligence, stands as a technology of profound duality. It holds the potential to unlock immense value by creating more efficient, responsive, and personalized systems across every sector of society. Simultaneously, it presents unprecedented challenges to cybersecurity, privacy, and the fundamental principles of human autonomy and fairness. Its development is not merely a technical matter but a societal one, demanding a proactive and collaborative approach to governance. This final section synthesizes the report's analysis into a set of strategic recommendations for key stakeholders and offers a concluding perspective on the path forward.

7.1 Recommendations for Policymakers and Regulators

Governments and regulatory bodies have a critical role to play in establishing the guardrails that will ensure IoB technologies develop in a manner that is safe, ethical, and aligned with democratic values.

Move Beyond Data Privacy to Algorithmic Accountability: Existing data protection laws are a necessary but insufficient foundation. Regulators must develop new frameworks that focus on the outcomes and impacts of IoB systems, not just the data inputs. This should include mandating transparency in how algorithms make decisions (explainability), requiring regular, independent audits to detect and mitigate bias, and establishing clear lines of legal accountability for harms caused by algorithmic systems.

Establish "Red Lines" for High-Risk IoB Applications: Not all applications of IoB are acceptable in a free and open society. Policymakers should engage in a broad public dialogue to identify and prohibit the use of IoB in specific high-risk domains. This could include bans on using behavioral data for manipulative political advertising, on the creation of generalized social scoring systems by governments, and on discriminatory uses in access to essential services like housing, employment, and insurance.

Empower Users with Meaningful Control and Data Literacy: Regulation should move beyond lengthy and complex privacy policies that few users read. This means mandating the development of user-centric data management dashboards that provide simple, granular control over how behavioral data is collected and used. Furthermore, public education initiatives should be funded to improve data and algorithmic literacy, empowering citizens to make more informed decisions and understand the systems that are shaping their choices.

7.2 Recommendations for Business Leaders and Technologists

The private sector is the primary driver of IoB innovation, and thus bears a significant responsibility for its ethical development and deployment. Adopting a posture of responsible innovation is not only an ethical imperative but also a long-term business necessity for maintaining customer trust.

Embed Ethical AI and Privacy by Design into the Development Lifecycle: Ethical considerations cannot be an afterthought. Organizations must integrate principles of fairness, accountability, transparency, and privacy into the entire product development process, from initial conception to final deployment. This involves creating diverse development teams, actively seeking to de-bias training data, and building privacy-preserving features into the core architecture of IoB systems.

Prioritize Robust Cybersecurity as a Core Business Function: Given the extreme sensitivity of behavioral data, the security of IoB systems must be treated as a top-tier business risk. This requires investing in state-of-the-art security practices, including end-to-end encryption for all data, strong multi-factor authentication, regular penetration testing, and a proactive threat monitoring and response capability. A single major breach of behavioral data could be an extinction-level event for a company's reputation.

Foster Trust Through Radical Transparency and Clear Value Exchange: The long-term viability of IoB business models depends on user trust. To earn and maintain this trust, companies must be radically transparent about what data they collect, how that data is used to make inferences, and what behavioral outcomes they are seeking to achieve. The value proposition must be clear and compelling for the user; individuals will only willingly participate in IoB ecosystems if they receive tangible, significant benefits in return for their data, and feel they are partners in the process rather than subjects of it.
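As one concrete instance of the privacy-preserving features recommended in this subsection, direct identifiers can be replaced with keyed pseudonyms before behavioral events reach an analytics pipeline. The sketch below uses HMAC-SHA256 from Python's standard library; the event fields and the key handling are simplified assumptions, and in a real deployment the key would be stored and rotated by a secrets manager rather than generated inline.

```python
import hashlib
import hmac
import secrets


def pseudonymize(user_id: str, key: bytes) -> str:
    """Derive a stable pseudonym from an identifier using a secret key."""
    return hmac.new(key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


# Assumption: generated inline for illustration only.
key = secrets.token_bytes(32)

event = {"user_id": "alice@example.com", "action": "opened_app"}
safe_event = {**event, "user_id": pseudonymize(event["user_id"], key)}
print(safe_event)
```

The same identifier always maps to the same pseudonym under a given key, so behavior can still be analyzed over time. Pseudonymization reduces exposure in the event of a breach, but the resulting data is not anonymous, because anyone holding the key can recompute the pseudonym for a known identifier and link records back to that person.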

7.3 Conclusion: Balancing Innovation and Human Dignity

The Internet of Behaviors, powered by Artificial Intelligence, is not an incremental technological step. It is a transformative force with the potential to fundamentally re-architect the relationship between individuals, institutions, and technology. It presents society with a critical choice: to pursue innovation at all costs, potentially creating opaque architectures of surveillance, manipulation, and control; or to guide this innovation with intention, building systems that are transparent, accountable, and ultimately serve to empower individuals and enhance collective well-being.

Navigating this choice successfully requires a paradigm shift in governance, moving from a reactive posture that addresses harms after they occur to a proactive one that anticipates risks and embeds values into technology by design. It demands a deep and sustained commitment to ethical principles from the technologists and business leaders building these systems. Most importantly, it requires an urgent and inclusive public dialogue about the kind of data-driven future we collectively want to inhabit. The ultimate goal must be to harness the undeniable power of the Internet of Behaviors to serve and elevate core human values—autonomy, fairness, and dignity—not to subordinate them to the logic of the algorithm. The path forward is complex and fraught with challenges, but by prioritizing responsible stewardship, we can strive to ensure that this next technological revolution enhances, rather than diminishes, what it means to be human.